What is AsterTrack?

AsterTrack is a custom multi-camera system designed to track markers and targets in 3D space for a variety of purposes like virtual reality and motion capture.

This so-called marker-based optical tracking is common in professional studios, but typically costs several thousand euros for even the most basic setup.

AsterTrack implements the same concept with much less expensive hardware, and tries to pioneer a user-friendly multi-camera tracking experience.

It aims to be as accurate as the best consumer VR tracking systems, with similarly low latency, while being very affordable for what it does.

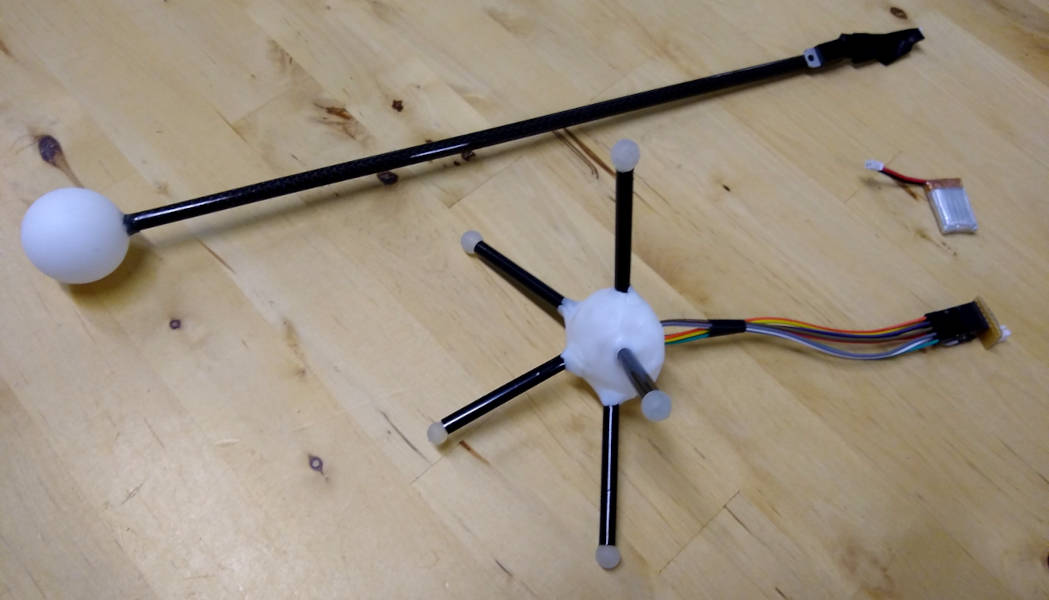

Targets can be anything you can attach retroreflective markers to, even 3D prints or existing objects without a battery.

This allows them to be much lighter, cheaper, and more versatile than any other type of tracker.

Notably, AsterTrack does not just rely on triangulation, but fully supports the use of flat marker targets, setting it apart even from most professional optical tracking systems.

This makes tracked objects much less intrusive as it does not require attaching big spherical markers, which would be impractical in consumer VR applications.

How does it work?

There are three main components of an AsterTrack tracking system:

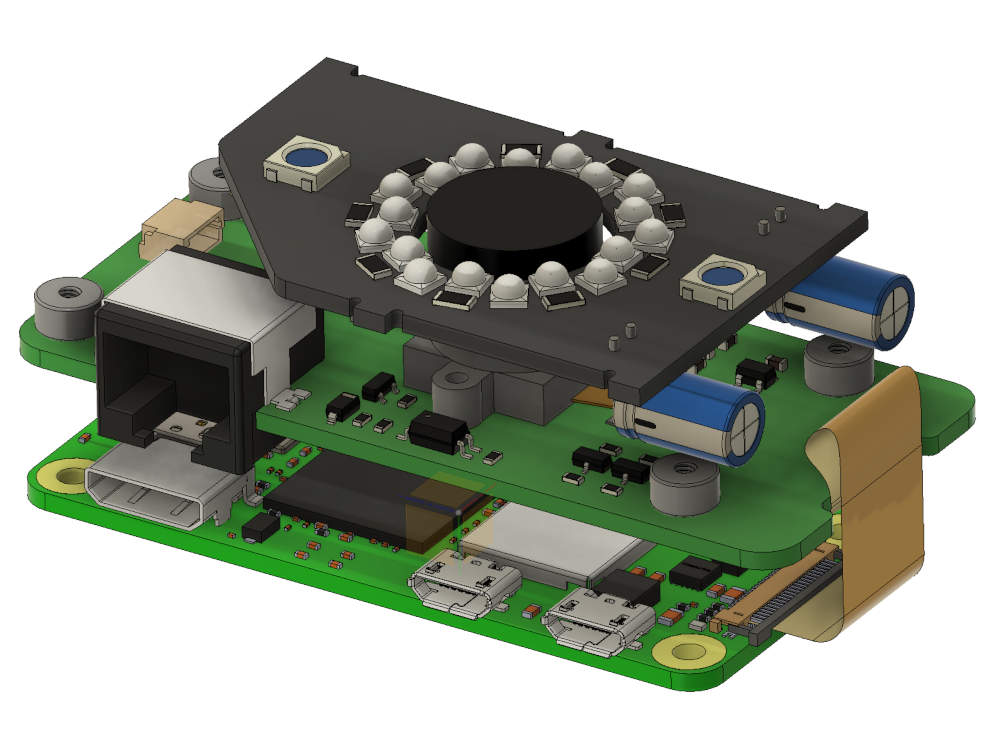

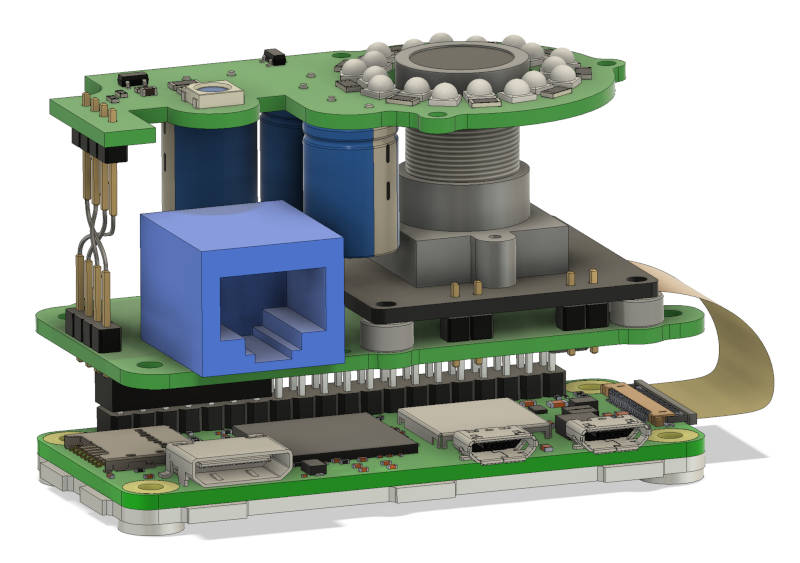

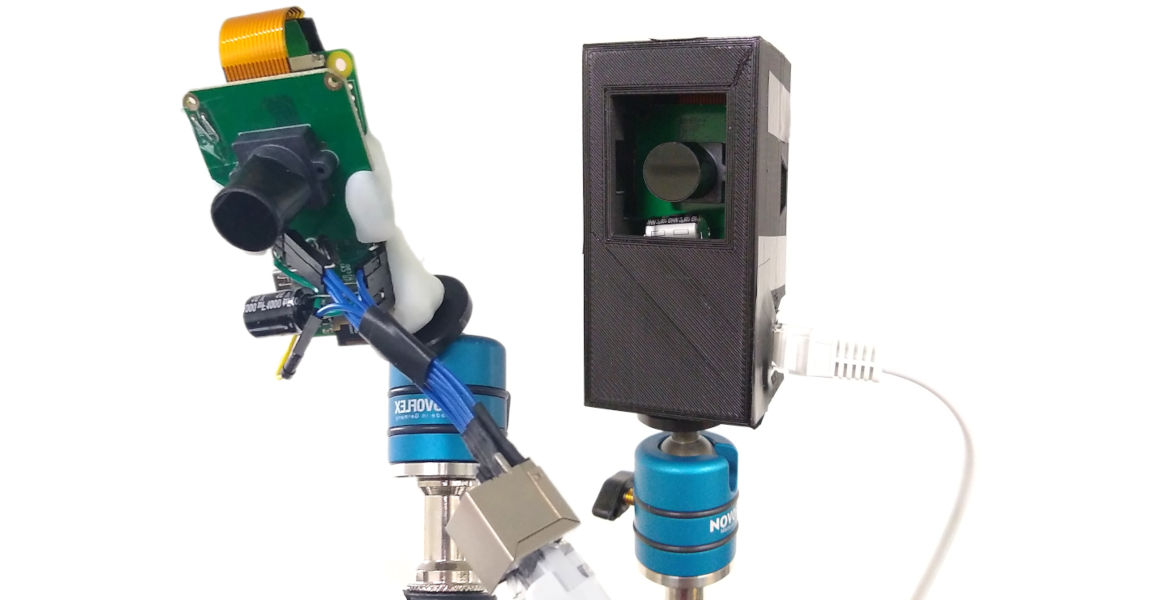

AsterTrack Cameras: The tracking cameras, which detect markers using onboard processing on the Raspberry Pi. This greatly lowers the processing and data throughput burden on the host system, enabling a higher quality of tracking, and much more of it.

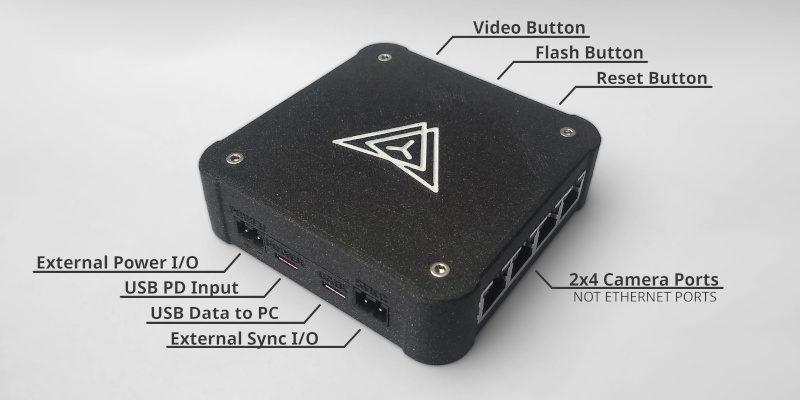

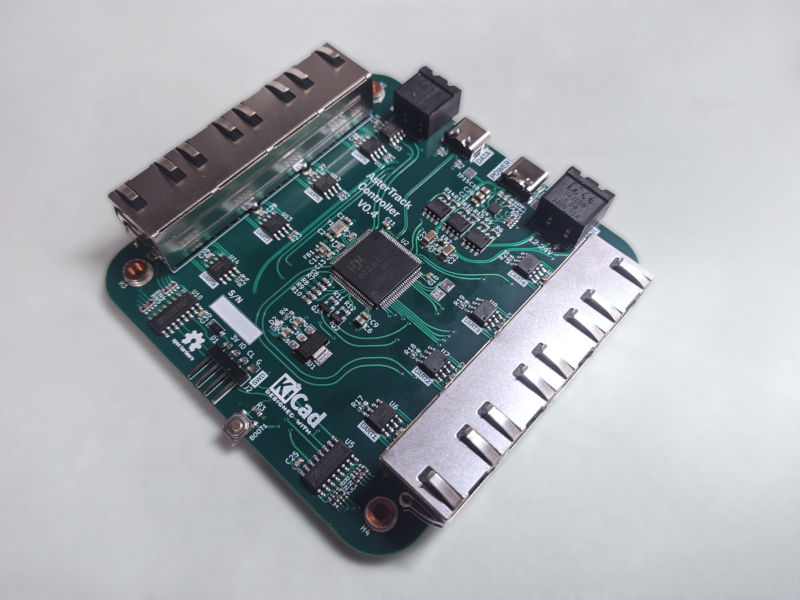

AsterTrack Controller: The hub that synchronises the cameras and connects them to the host system via USB, ensuring maximum stability for wired cameras and a good user experience.

AsterTrack Server: The software that performs the tracking, turning the marker observations into usable 3D tracking data for use in external applications.
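To give a feel for the simplest case of turning marker observations into 3D data, here is a minimal triangulation sketch. It is not AsterTrack's implementation: it assumes each calibrated camera has already converted a 2D marker observation into a ray (a camera position plus a direction in world space), and the function name `triangulate_midpoint` is made up for this example. It returns the midpoint of the shortest segment between two rays, plus the remaining gap as a rough error measure.

```python
import math

def _add(a, b):
    return tuple(x + y for x, y in zip(a, b))

def _sub(a, b):
    return tuple(x - y for x, y in zip(a, b))

def _scale(a, s):
    return tuple(x * s for x in a)

def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def triangulate_midpoint(origin_a, dir_a, origin_b, dir_b):
    """Estimate a marker position from two camera rays (hypothetical helper).

    Each ray is origin + s * direction. Returns (midpoint, gap), where gap is
    the distance between the closest points on the two rays.
    """
    w = _sub(origin_a, origin_b)
    a = _dot(dir_a, dir_a)
    b = _dot(dir_a, dir_b)
    c = _dot(dir_b, dir_b)
    d = _dot(dir_a, w)
    e = _dot(dir_b, w)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are (nearly) parallel, cannot triangulate")
    s = (b * e - c * d) / denom  # parameter of the closest point on ray A
    t = (a * e - b * d) / denom  # parameter of the closest point on ray B
    point_a = _add(origin_a, _scale(dir_a, s))
    point_b = _add(origin_b, _scale(dir_b, t))
    midpoint = _scale(_add(point_a, point_b), 0.5)
    return midpoint, math.dist(point_a, point_b)

# Two cameras one metre left and right of the origin, both seeing a marker
# two metres in front of them (made-up numbers for illustration).
marker, gap = triangulate_midpoint((-1, 0, 0), (1, 0, 2), (1, 0, 0), (-1, 0, 2))
```

With more than two cameras, a real system would typically solve for the point in a least-squares sense over all rays and use the residual to reject mismatched observations.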

Unique sets of such markers can be calibrated and tracked as targets, enabling rotational tracking without an IMU by associating the markers across frames and across multiple calibrated cameras. Full support for IMUs is planned to improve occlusion resistance in smaller setups, especially for VR use.
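The idea that a calibrated set of markers yields rotation without an IMU can be illustrated with a small sketch. This is a simplification and not how AsterTrack tracks targets: it assumes the 3D positions of three non-collinear markers are already known and correctly matched to their calibrated positions, and the names `frame_from_markers` and `target_pose` are invented for this example.

```python
import math

def _sub(a, b):
    return [x - y for x, y in zip(a, b)]

def _cross(a, b):
    return [a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0]]

def _normalize(v):
    n = math.sqrt(sum(x * x for x in v))
    if n < 1e-12:
        raise ValueError("markers are coincident or collinear")
    return [x / n for x in v]

def frame_from_markers(p0, p1, p2):
    """Orthonormal axes (as rows x, y, z) spanned by three markers."""
    x = _normalize(_sub(p1, p0))
    z = _normalize(_cross(x, _sub(p2, p0)))
    y = _cross(z, x)
    return [x, y, z]

def target_pose(calibrated, observed):
    """Return (R, t) such that observed is approximately R * calibrated + t.

    calibrated and observed are lists of three matched 3D marker positions.
    R is a 3x3 rotation matrix as nested lists, t a translation vector.
    """
    fc = frame_from_markers(*calibrated)
    fo = frame_from_markers(*observed)
    # R maps each calibrated axis onto the matching observed axis.
    R = [[sum(fo[k][i] * fc[k][j] for k in range(3)) for j in range(3)]
         for i in range(3)]
    rotated_p0 = [sum(R[i][j] * calibrated[0][j] for j in range(3))
                  for i in range(3)]
    t = _sub(observed[0], rotated_p0)
    return R, t

# A target turned 90 degrees about the z axis and moved to (1, 2, 3).
calibrated = [(0, 0, 0), (1, 0, 0), (0, 1, 0)]
observed = [(1, 2, 3), (1, 3, 3), (0, 2, 3)]
R, t = target_pose(calibrated, observed)
```

In practice, observations are noisy and targets have more than three markers, so a least-squares fit over all matched markers would be the more robust choice.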

If you're interested in more details, check out our documentation on the hardware design and system architecture.

Current State

The base multi-camera experience of AsterTrack is solid and ready for use, though some usability improvements can still be made.

The rest depends heavily on the use case:

As a tracking system originally designed for consumer VR use, the support of flat marker targets and 6-DOF tracking in general is well developed.

But to be truly usable for VR, the IMU integration needs to be completed, and common trackers need to be designed for 3D printing.

IMU integration will also help track small trackers more reliably.

Support for use cases relying on a triangulated point cloud of markers is still being developed, both the frame-to-frame labelling of markers and integrations like C3D export.
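For readers unfamiliar with frame-to-frame labelling, the sketch below shows one simple approach: greedy nearest-neighbour matching. It is an illustration only and says nothing about how AsterTrack will do this. The function name `label_markers`, the use of metres, and the `max_distance` threshold are all assumptions made for this example.

```python
import math

def label_markers(previous, current, max_distance=0.05):
    """Carry marker labels from the previous frame over to the current one.

    previous: dict mapping label -> (x, y, z) from the last frame.
    current:  list of unlabelled (x, y, z) points in the new frame.
    Returns a dict mapping label -> index into current. Labels with no point
    within max_distance stay unassigned (e.g. when a marker is occluded).
    """
    # All label/point pairs, closest first.
    candidates = sorted(
        (math.dist(position, point), label, index)
        for label, position in previous.items()
        for index, point in enumerate(current)
    )
    assigned = {}
    used_points = set()
    for distance, label, index in candidates:
        if distance > max_distance:
            break  # all remaining pairs are even further apart
        if label in assigned or index in used_points:
            continue
        assigned[label] = index
        used_points.add(index)
    return assigned

# Two known markers, three points in the new frame (one is a new or stray point).
previous = {"A": (0.0, 0.0, 0.0), "B": (1.0, 0.0, 0.0)}
current = [(1.01, 0.0, 0.0), (0.02, 0.0, 0.0), (5.0, 5.0, 5.0)]
labels = label_markers(previous, current)
```

Greedy matching is easy to follow but can swap labels when markers pass close to each other. Predicting marker motion or solving the assignment globally are common ways to make this more robust.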

The hardware is in the process of receiving its final major iteration, adding protections, more mounting points, and support for future wireless features.

Once it is ready, it will be available as a dev kit, and DIY versions are planned as well.

Roadmap

Our first priority is getting the dev kits ready for early adopters who already know what they need an optical tracking system for.

In the lead-up to this, we will work on integrations into third-party software used for MoCap and VR alike.

But for true VR use, we will need to complete the IMU integration, both for existing VR hardware via Monado and for custom IMU trackers.

This is our next priority. It involves making the retrofitting of existing VR hardware as user-friendly as possible, and creating premade VR-ready designs for, among others, DIY HMDs, controllers, and full-body tracking (FBT).

Once the VR use case is fleshed out and ready for day-to-day use, we intend to move from small batches to a crowdfunding-supported consumer version.

Recording with three trackers: a Reverb G2 HMD and its controller (depicted above) as well as a plastic model gun, all using flat markers applied to existing geometry.

All Hardware Design after 2021 by JX35

2024

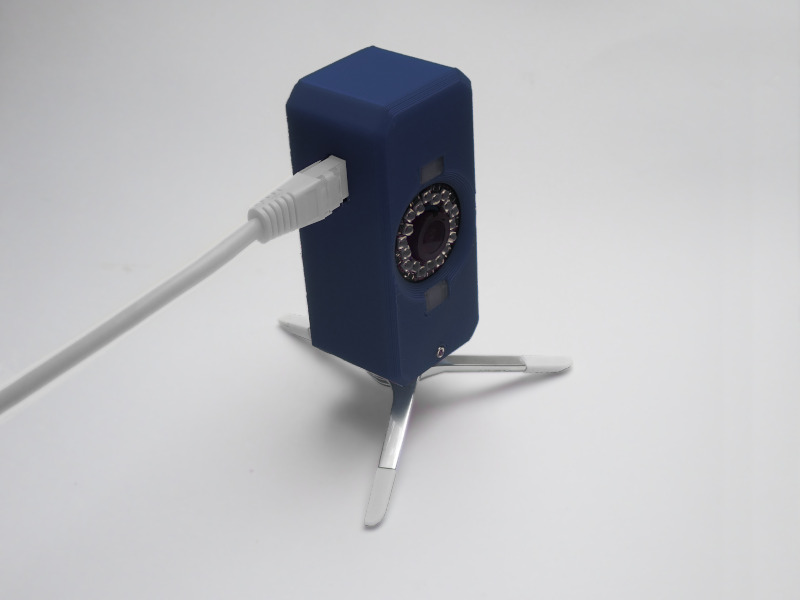

Prototype Tracking Controller V0.4.2 in new case

2023

Prototype Tracking Camera V0.3 with most final capabilities

Prototype Tracking Controller V0.4 supports 8 cameras with USB-PD, USB 2.0 HS, and Sync & Power IO for larger setups

2022

Prototype Tracking Camera V0.2 with improved case design

Prototype Tracking Camera V0.2 has ESD protections, uses RS-422, a smaller camera module and more LEDs

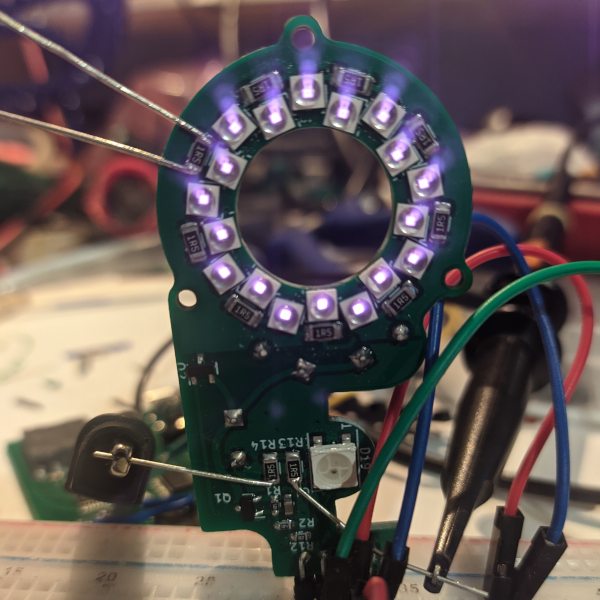

Prototype Tracking Camera V0.1 uses custom PCBs and adds support for passive markers

2021

Early Prototype Tracking Cameras with minimal wiring and components

Early Target and Calibration Wand made from active markers (Infrared LEDs)

2019-2020

First proof-of-concept hardware, including Tracking Camera (Marker Detector) and Tracking Controller (Marker Tracker)